If /var/log/journal does not exist, then use /run/log/journal.If /var/log/journal exists, then use /var/log/journal.

If auto is returned, check if /var/log/journal exists.If volatile is returned, your systemdLogPath should be /run/log/journal.

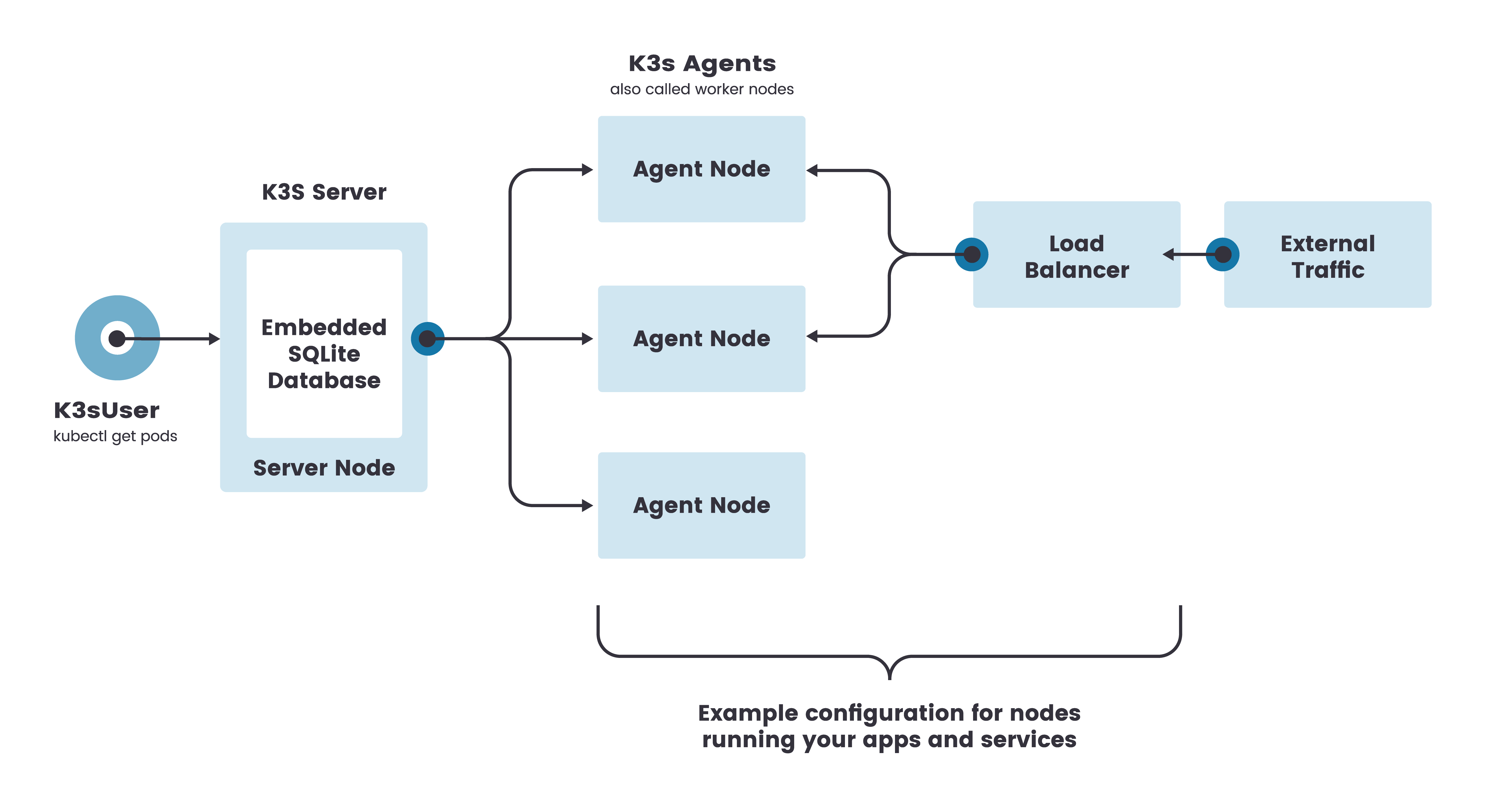

If persistent is returned, your systemdLogPath should be /var/log/journal.Run cat /etc/systemd/nf | grep -E ^\#?Storage | cut -d"=" -f2 on one of your nodes.To determine your systemdLogPath configuration, see steps below. For example, Ubuntu defaults to var/log/journal. While the run/log/journal directory is used by default, some Linux distributions do not default to this path. In order to collect these logs, the systemdLogPath needs to be defined. K3s and RKE2 Kubernetes distributions log to journald, which is the subsystem of systemd that is used for logging. In Rancher logging, SystemdLogPath must be configured for K3s and RKE2 Kubernetes distributions. If you're already using a cloud provider's own logging solution such as AWS CloudWatch or Google Cloud operations suite (formerly Stackdriver), it is not necessary to enable this option as the native solution will have unrestricted access to all logs. When enabled, Rancher collects all additional node and control plane logs the provider has made available, which may vary between providers To enable hosted Kubernetes providers as additional logging sources, enable Enable enhanced cloud provider logging option when installing or upgrading the Logging Helm chart. Linux Nodes (including in Windows cluster) The following table summarizes the sources where additional logs may be collected for each node types: Logging Source In some cases, Rancher may be able to collect additional logs. Additional Logging Sources īy default, Rancher collects logs for control plane components and node components for all cluster types. Then, when installing the logging application, configure the chart to be SELinux aware by changing to true in the values.yaml. To use Logging v2 with SELinux, we recommend installing the rancher-selinux RPM according to these instructions. After being historically used by government agencies, SELinux is now industry standard and is enabled by default on CentOS 7 and 8. 
Security-Enhanced Linux (SELinux) is a security enhancement to Linux. Another example is that the term node pool in RKE1 is now known as machine pool in RKE2.Logging v2 was tested with SELinux on RHEL/CentOS 7 and 8. The duration shown after Up is the time the container has been running. For example, in RKE1 provisioning, you use node templates in RKE2 provisioning, you can configure your cluster node pools when creating or editing the cluster. There are three specific containers launched on nodes with the controlplane role: kube-apiserver kube-controller-manager kube-scheduler The containers should have status Up. You will notice that some terms have changed or gone away going from RKE1 to RKE2. Users who are used to RKE1 provisioning should take note of this new RKE2 behavior which may be unexpected. When editing the cluster and enabling drain before delete, the existing control plane nodes and worker are deleted and new nodes are created.The following are some specific example configuration changes that may cause the described behavior: Note that for etcd nodes, the same behavior does not apply. This is controlled by CAPI controllers and not by Rancher itself. When you make changes to your cluster configuration in RKE2, this may result in nodes reprovisioning. RKE2/K3s provisioning is built on top of the Cluster API (CAPI) upstream framework which often makes RKE2-provisioned clusters behave differently than RKE1-provisioned clusters. RKE1 with Docker does not allow mirroring. RKE2's container runtime is containerd, which allows things such as mirroring a container image registry. By contrast, RKE2 launches control plane components as static pods that are managed by the kubelet. An HA K3s cluster comprises: Three or more server nodes that will serve the Kubernetes API and run other control plane services.

RKE1 uses Docker for deploying and managing control plane components, and it also uses Docker as the container runtime for Kubernetes. Single server clusters can meet a variety of use cases, but for environments where uptime of the Kubernetes control plane is critical, you can run K3s in an HA configuration. RKE1 and RKE2 have several slight behavioral differences to note, and this page will highlight some of these at a high level. It is considered the next iteration of the Rancher Kubernetes Engine, now known as RKE1. RKE2, also known as RKE Government, is a Kubernetes distribution that focuses on security and compliance for U.S. Behavior Differences Between RKE1 and RKE2
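The systemdLogPath rules described on this page (persistent, volatile, and auto) can be sketched as a small shell function. This is only an illustrative sketch: the function name `resolve_systemd_log_path` and its optional second argument are hypothetical helpers introduced here, not part of Rancher or systemd. It assumes you pass in the Storage value you obtained from your node.

```shell
#!/bin/sh
# Sketch only: map a journald Storage value to a systemdLogPath.
# resolve_systemd_log_path is a hypothetical helper name, not a Rancher tool.
# $1: the Storage value (persistent, volatile, or auto)
# $2: optional directory to test in the "auto" case (an assumption added
#     here so the check can be exercised; defaults to /var/log/journal)
resolve_systemd_log_path() {
    storage="$1"
    persistent_dir="${2:-/var/log/journal}"

    case "$storage" in
        persistent)
            echo "/var/log/journal"
            ;;
        volatile)
            echo "/run/log/journal"
            ;;
        auto)
            # With auto, the path depends on whether the persistent
            # journal directory exists on the node.
            if [ -d "$persistent_dir" ]; then
                echo "/var/log/journal"
            else
                echo "/run/log/journal"
            fi
            ;;
        *)
            echo "unrecognized Storage value: $storage" >&2
            return 1
            ;;
    esac
}
```

For example, after sourcing the function on a node, `resolve_systemd_log_path auto` prints the path to use for systemdLogPath based on whether /var/log/journal exists there. Any value other than the three handled above is reported as an error rather than guessed at.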
